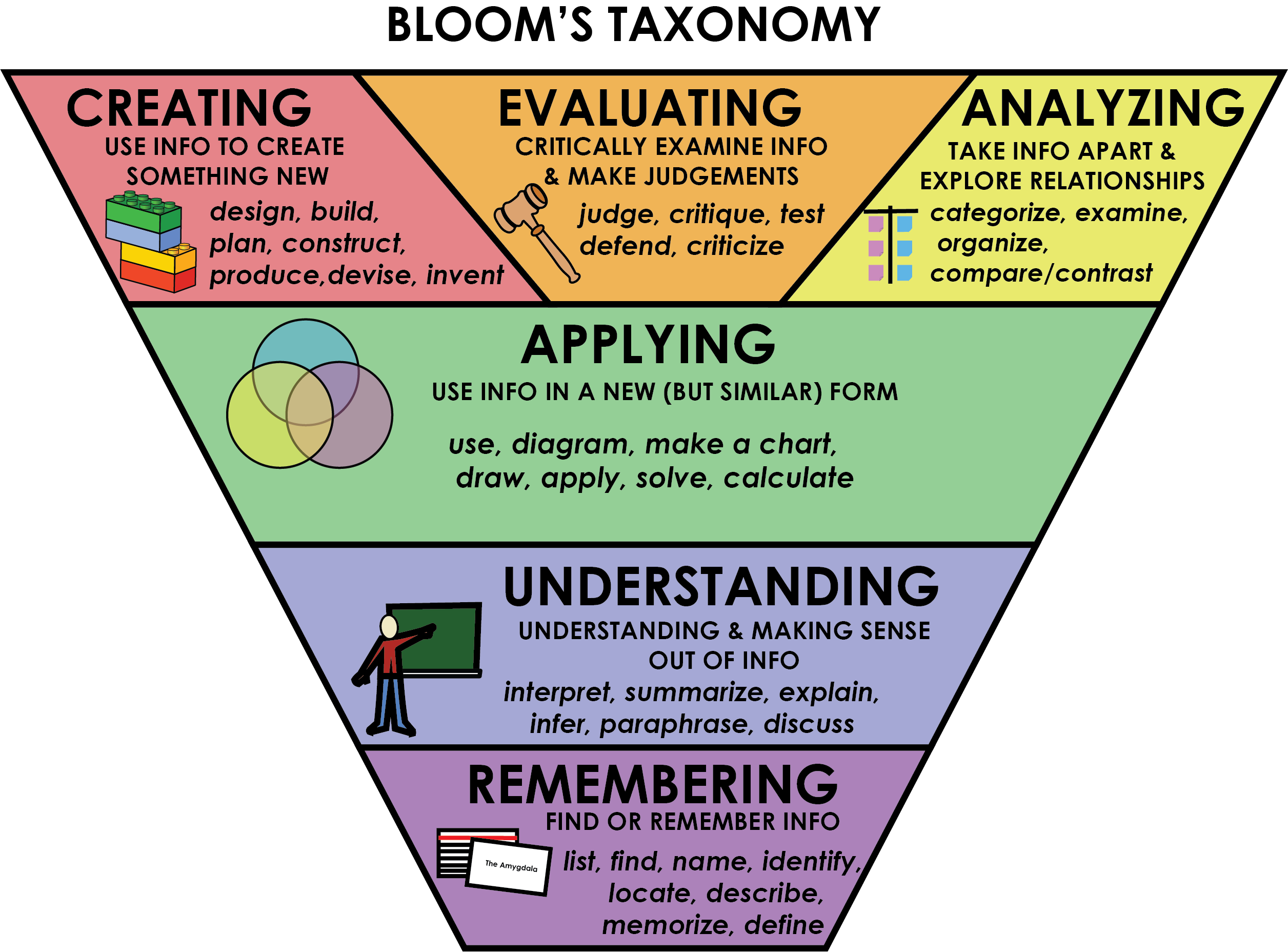

This past year, I have experimented with Moodle-based exams. This is a holdover from the remote instruction that I, along with basically everyone else, was forced to do during the COVID-19 pandemic. Personally, I am not a fan of traditional scantron-based multiple choice exams and prefer long-answer exams. My sense is that multiple choice exams test students’ ability to recognize a correct answer and/or use process of elimination to narrow down the choices to those that are most probable. Long-answer exams, in contrast, require students to write detailed solutions and explain/justify their work in words, tasks which engage some of the “higher-order” domains of Bloom’s Taxonomy.[1]

Despite my preference for long-answer exams, implementing them in courses of several hundred students is difficult, as the grading quickly becomes intractable. While some physics departments build the resources needed to grade several hundred long-answer exams into the TA workload allotment, UMass Amherst’s Physics Department, by custom, does not. My solution in the past has been a hybrid exam: roughly ten multiple choice questions and two long-answer questions. Typically, one of these long-answer questions would be a traditional “solve the algebra” problem, while the other would require students to explain a physics phenomenon in words. While this worked in terms of respecting the TA workload, there were still challenges. The primary issue was turnaround time. I felt that giving the TAs two weekends to complete the grading was the minimum. As such, at least two weeks would pass before students got their full exams back. Two weeks in my courses is typically a full unit out of the five in the course. After such a long turnaround, students had moved on and did not get as much learning out of the exam feedback as they would have if the exams had been returned more promptly.

Moodle-based exams, by contrast, allow grades to be released immediately if the instructor so desires (I typically take a few days to review the scores and look for problematic questions, etc.). In my mind, the pedagogical benefit of this immediate feedback compensates for the losses arising from a lack of long-answer questions, particularly when the variety of auto-graded question types provided by Moodle is considered.

In contrast to paper scantrons, which are limited to five-choice multiple choice questions, Moodle allows for unlimited numbers of choices, multiple select, entering numbers, fill in the blank (via drop down or string matching), and placing markers on images. In the case of physics, these question types can be quite useful. Questions which require students to enter a number are obviously applicable. The unlimited choices are also useful, as all the possible options for, say, the direction of a vector can be made available. With a little creativity, the other question types can also be employed. For example, questions which require students to place a marker on an image can be useful for ray diagrams: the configuration of lenses can be superimposed on a grid, which is printed and given to students to use in solving the problem. Once students have figured out the locations of all intermediate images and the final image, they can use the grid to place markers in the correct locations on the screen.

Instructors can also include videos or animations in the exam questions. This can be useful to clarify questions that are difficult to word in an unambiguous way. Videos can also provide a connection between the exam and very real phenomena. A question can include a video of, for example, a demonstration which the students then need to explain as part of answering the question.

Beyond the student experience of the exam, Moodle provides other advantages. Students who need to be away on exam day due to minor illness or participation in a University-sanctioned event can take the exam remotely. The experience that almost all instructors were forced to develop over the course of the COVID-19 pandemic makes this relatively straightforward, particularly considering the small fraction of students who will need such accommodations. Moodle also makes it easy to build up a question bank of problems over time. Tags can be used to keep track of the content of each problem as well as to record the last time a problem was used.

Of course, exam integrity is always a key concern when considering computer-based exams. The proctored environment is one key. Another important step in maintaining exam integrity is to remove any need for students to switch windows: provide printed equation sheets and require external calculators, for example. There are also several other features one can build into the exam to help promote exam integrity, which, for obvious reasons, I do not want to share publicly. If you are an instructor and would like to hear some of these tips, please do not hesitate to reach out to me.

In summary, Moodle (and other learning management systems) provides a nice format for hosting exams in large enrollment courses. Such exams are, in my opinion, still inferior to full long-answer paper-based exams. However, long-answer exams can be difficult to implement in large enrollment courses and generally result in very delayed feedback, which we know limits the educational value of examinations. Moodle provides a nice middle ground between sophisticated questions and rapid feedback. In addition, such systems provide the instructor with additional benefits: they reduce the need for makeup exams and make it easier to maintain a bank of questions.

[1] I acknowledge the limitations of Bloom’s Taxonomy, particularly in terms of thinking about levels, but it is a useful framework for conversation.